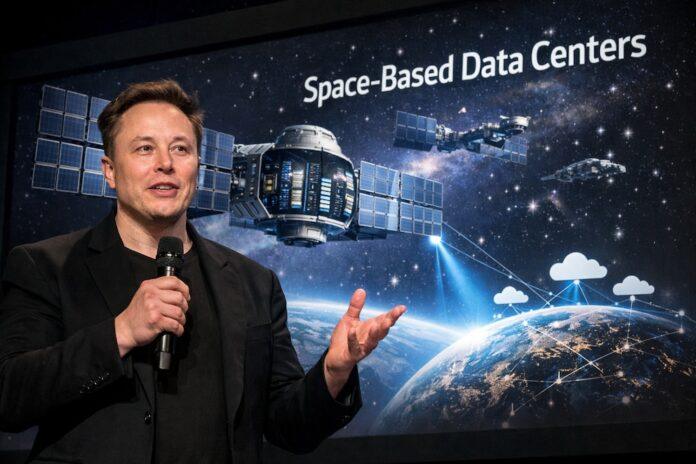

Elon Musk announced his intention to deploy the first artificial intelligence (AI) data centers in space within the next 36 months.

The businessman maintains that low Earth orbit offers energy and scalability advantages that cannot be matched on Earth. The plan rests on the efficiency of solar panels outside the atmosphere and on the growing demand for processing power for AI systems.

Solar energy in space, Musk said on Dwarkesh Patel’s Cheeky Pint podcast, can be up to five times more efficient than on the Earth’s surface.

The businessman stressed that, in low orbit, solar panels receive constant light, with no day-night cycles, seasonality, clouds or atmosphere, factors that on Earth cause an energy loss of approximately 30%. This environment would allow data centers to operate without depending on batteries or heavy structures, which would reduce manufacturing costs and facilitate the deployment of technological infrastructure in space.

Musk plans to put a terawatt of GPUs into orbit, powered entirely by solar energy, to meet the computational demand of artificial intelligence. According to the businessman, space is the only place where this type of infrastructure can truly be scaled, and the availability of energy there removes one of the main obstacles that terrestrial data centers face as AI models grow more sophisticated.

The businessman also explained that solar panels designed for space can be manufactured without glass or batteries, which further reduces weight and the associated costs. Musk stated that space will be the most economically attractive place to deploy AI and that the plan will materialize in less than three years.

The deployment of data centers in orbit raises questions about the maintenance of systems, particularly GPUs, the replacement of which is not feasible in the space environment.

Musk played down this technical challenge and maintained that, once the initial chip debugging phase is over, these components reach high standards of reliability. “I don’t think maintenance will be a problem,” he said, and he also mentioned the possibility of using Nvidia chips, Tesla’s AI6, TPUs or Trainiums.

The project envisages launching up to one million satellites to establish a network of space data centers aimed at expanding the capabilities of artificial intelligence. These satellites would function as interconnected nodes and power AI systems that require high processing capacity, using solar energy as their main source.

The plan has already prompted requests to the U.S. Federal Communications Commission (FCC) for the necessary authorizations. The initiative follows the recent acquisition of xAI by SpaceX, an operation that reinforces the integration of the aerospace sector and artificial intelligence within the tycoon’s business conglomerate.

Designing and operating data centers in space will require overcoming engineering challenges linked to radiation and to the bandwidth needed to transfer data between Earth and orbit. Musk pointed out that the biggest current challenge lies in the availability of turbines for cooling systems, relegating to the background the possible difficulties of operating hardware in space.

The ultimate goal is to meet the growing demand for AI processing in an efficient and sustainable way by drawing on the unique advantages of the space environment. Elon Musk’s proposal marks a new milestone in the convergence of the space industry and artificial intelligence and could transform the future of large-scale computing.